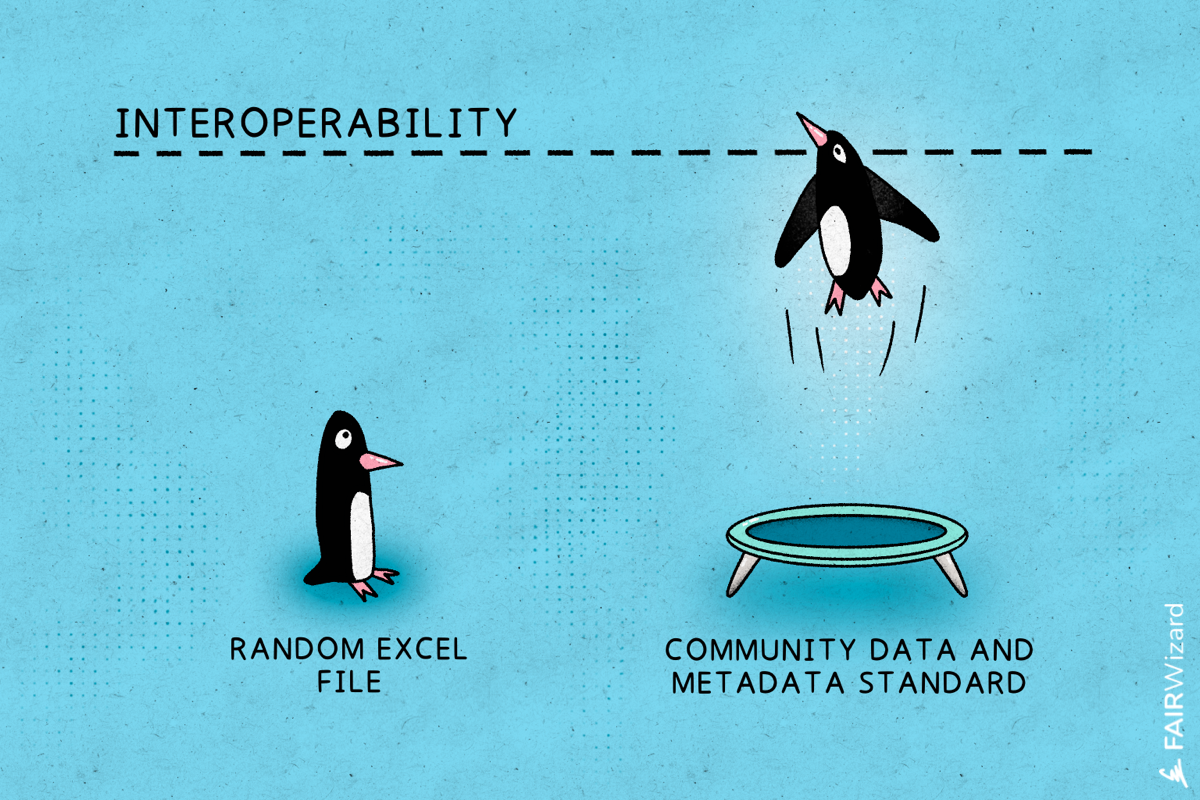

Interoperability is one of the defining challenges in modern research data management. Institutions generate large volumes of data across projects, disciplines, and collaborations, yet too often that data remains isolated within individual systems. Without shared standards, structured metadata, and machine-accessible interfaces, even well-managed datasets struggle to deliver their full scientific and strategic value.

Interoperability is central to both FAIR and Open Science. It ensures that research data can be integrated with other datasets, interpreted correctly by machines, and reused beyond its original context. When interoperability is missing, reporting becomes manual, collaboration becomes fragmented, and long-term reuse becomes uncertain.

In this environment, Data Management Planning is no longer just documentation. It is infrastructure.

Interoperability begins with standards. Metadata standards such as Dublin Core or DataCite define how datasets are described, cited, and linked. Domain-specific schemas add disciplinary precision, ensuring that scientific context is preserved.

Persistent identifiers, including DOIs for datasets and ORCID iDs for researchers, further strengthen interoperability within Research Data Management ecosystems. They create stable, machine-readable links between projects, people, publications, and institutions. These identifiers are not merely administrative tools. They are the backbone of discoverability, attribution, and integration.
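As a small illustration of what "machine-readable" means for an identifier, the sketch below validates the check character of an ORCID iD, which uses the ISO 7064 MOD 11-2 checksum. The function names are our own and are not part of any ORCID library; the example value is the sample iD that ORCID uses in its documentation.

```python
import re

# Shape of an ORCID iD: four hyphen-separated groups, last character may be X.
ORCID_PATTERN = re.compile(r"[0-9]{4}-[0-9]{4}-[0-9]{4}-[0-9]{3}[0-9X]")


def orcid_check_character(base_digits: str) -> str:
    """Compute the ISO 7064 MOD 11-2 check character for the first 15 digits."""
    total = 0
    for ch in base_digits:
        total = (total + int(ch)) * 2
    result = (12 - total % 11) % 11
    return "X" if result == 10 else str(result)


def is_valid_orcid(orcid: str) -> bool:
    """Return True if the iD has the expected shape and a matching checksum."""
    if not ORCID_PATTERN.fullmatch(orcid):
        return False
    compact = orcid.replace("-", "")
    return orcid_check_character(compact[:15]) == compact[15]


print(is_valid_orcid("0000-0002-1825-0097"))  # True
print(is_valid_orcid("0000-0002-1825-0098"))  # False (wrong check character)
```

A check like this only confirms that an iD is well formed. Confirming that it belongs to a particular researcher still requires a lookup against the ORCID registry.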

Controlled vocabularies and ontologies also play a critical role. Free-text descriptions limit automation and integration. Structured terminology enables systems to interpret meaning consistently, making cross-project and cross-institutional analysis possible.
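The difference between free text and structured terminology can be sketched in a few lines. The vocabulary below is entirely hypothetical and far smaller than a real one; it only shows the idea of mapping varied free-text entries onto a single preferred term so that records from different projects can be compared.

```python
from typing import Optional

# Hypothetical controlled vocabulary: preferred term -> accepted free-text variants.
VOCABULARY = {
    "interview transcript": {"interview", "interviews", "transcribed interviews"},
    "survey data": {"survey", "questionnaire", "questionnaire results"},
}


def normalize_term(free_text: str) -> Optional[str]:
    """Map a free-text entry to its preferred term, or None if it is unknown."""
    cleaned = " ".join(free_text.lower().split())
    for preferred, variants in VOCABULARY.items():
        if cleaned == preferred or cleaned in variants:
            return preferred
    return None


print(normalize_term("  Questionnaire "))  # survey data
print(normalize_term("misc files"))        # None
```

Entries that return `None` are the ones a curator would need to review, which is usually preferable to silently storing an uninterpretable label.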

Adopting standards is not only about compliance with funder requirements. It ensures that research outputs, DMP records, and metadata remain usable, integrable, and visible over time.

A key shift in research data management is moving from static documents to structured metadata models. Traditional Data Management Plans (DMPs) were often written as narrative text. While informative, they were difficult to reuse programmatically.

Machine-actionable DMPs change this dynamic. By representing projects, datasets, storage plans, ethical considerations, and preservation strategies as structured entities, the DMP becomes data in its own right.

This enables institutions to monitor how data is managed across projects, track FAIR-related indicators, and generate consistent outputs for funders.

Instead of manually compiling information from multiple documents, data can be queried directly and integrated into governance and reporting systems.

This transformation turns data management planning from an isolated requirement into part of a connected digital infrastructure.
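To make the idea of a DMP as queryable data more concrete, here is a minimal sketch. The class and field names are illustrative assumptions and do not follow any particular DMP schema; the DOIs and project names are placeholders.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class Dataset:
    title: str
    identifier: Optional[str] = None  # e.g. a DOI; None if not yet assigned
    license: Optional[str] = None
    repository: Optional[str] = None


@dataclass
class DMP:
    project: str
    contact_orcid: str
    datasets: List[Dataset] = field(default_factory=list)


def fair_indicator_summary(dmps: List[DMP]) -> dict:
    """Count simple FAIR-related indicators across all planned datasets."""
    all_datasets = [ds for dmp in dmps for ds in dmp.datasets]
    return {
        "datasets": len(all_datasets),
        "with_identifier": sum(1 for ds in all_datasets if ds.identifier),
        "with_license": sum(1 for ds in all_datasets if ds.license),
    }


def projects_missing_identifiers(dmps: List[DMP]) -> List[str]:
    """List projects that have at least one dataset without an identifier."""
    return [
        dmp.project
        for dmp in dmps
        if any(ds.identifier is None for ds in dmp.datasets)
    ]


# Placeholder records for illustration only.
dmps = [
    DMP("Project A", "0000-0002-1825-0097", [
        Dataset("Survey responses", "10.1234/example.1", "CC-BY-4.0", "Repo X"),
        Dataset("Interview transcripts"),
    ]),
    DMP("Project B", "0000-0002-1825-0097", [
        Dataset("Sensor readings", "10.1234/example.2", None, "Repo X"),
    ]),
]

print(fair_indicator_summary(dmps))
print(projects_missing_identifiers(dmps))
print(json.dumps(asdict(dmps[0]), indent=2))  # the same record as exchangeable JSON
```

The same records serve two purposes here: they answer reporting questions directly, and they serialize to JSON for exchange with other systems. A narrative DMP document offers neither without manual work.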

Standards and metadata models provide structure. APIs make that structure operational.

Through REST APIs and similar interfaces, research data management systems can exchange information with grant platforms, repositories, analytics tools, and institutional systems. A project registered in one system can automatically trigger the creation of a structured DMP record. Updates to datasets can be reflected in reporting dashboards without manual intervention.

APIs expose the records held in these systems, such as projects, DMPs, and datasets, to other programs. When everything that can be done in a user interface can also be done through an API, data management becomes scalable. This is particularly important for institutions managing hundreds or thousands of projects simultaneously.
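The project-registration trigger mentioned above might look roughly like the following on the client side. This is a sketch under stated assumptions: the endpoint URL, the payload fields, and the function name are all hypothetical and do not describe any specific product's API. The request is only built, not sent, so the example runs without a server.

```python
import json
import urllib.request

# Hypothetical endpoint; a real system would document its own URL and schema.
DMP_ENDPOINT = "https://rdm.example.org/api/dmps"


def build_dmp_request(project: dict, endpoint: str = DMP_ENDPOINT) -> urllib.request.Request:
    """Build a POST request that asks the DMP system to create a record for a project."""
    payload = {
        "project_id": project["id"],
        "title": f"DMP for {project['title']}",
        "datasets": [],  # filled in later as datasets are planned
    }
    return urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Called by the grant platform when a new project is registered.
request = build_dmp_request({"id": "P-001", "title": "Coastal Erosion Study"})
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# Sending is omitted: urllib.request.urlopen(request) would need a real endpoint.
```

Because the registration event carries the project's identifier and title, the DMP record starts out linked to its project instead of being matched up by hand later.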

Interoperability should not be viewed as a technical add-on. It is a strategic institutional capability.

Research-performing organizations increasingly operate within complex digital ecosystems. Funding bodies demand structured reporting. Open Science policies require transparency. FAIR mandates expect machine-readability. In this context, interoperable Data Management Planning systems reduce risk and improve governance.

Without interoperable Research Data Management infrastructure, organizations rely on spreadsheets, email exchanges, and manual reconciliation of DMP information. This increases administrative burden and reduces data quality.

With interoperable systems built on standards, structured metadata, machine-actionable DMPs, and APIs, institutions gain:

- Greater transparency in research data practices
- Improved compliance with funder mandates
- Reduced operational overhead
- Stronger FAIR alignment
- Scalable Open Science implementation

Interoperability is itself one of the four FAIR principles, and it directly supports the other three: findability, accessibility, and reusability. Structured DMP data and standardized interfaces allow both machines and humans to locate, access, combine, and reuse research outputs effectively.

Interoperability in research data depends on deliberate choices around standards, metadata models, and APIs. Together, these elements transform Data Management Planning from a static compliance exercise into a connected, machine-actionable component of research infrastructure.

For institutions committed to FAIR and Open Science, investing in interoperable data management is not optional. It is foundational to building resilient, scalable, and future-ready research environments.

Interoperability in Research Data Management refers to the ability of systems, datasets, and Data Management Plans (DMPs) to exchange and interpret information consistently across platforms. It relies on shared metadata standards, persistent identifiers, structured data models, and APIs.

APIs enable machine-actionable DMP workflows by allowing systems to exchange DMP data automatically. They reduce manual reporting, synchronize updates across platforms, and support scalable Data Management Planning across institutions.

Metadata standards ensure that research data and DMP records are described consistently and can be interpreted by both humans and machines. This supports FAIR principles, enhances discoverability, and enables integration across repositories and research infrastructures.